At its Cloud Data Summit, Google today announced the preview launch of BigLake, a new data lake storage engine that makes it easier for enterprises to analyze the data in their data warehouses and data lakes.

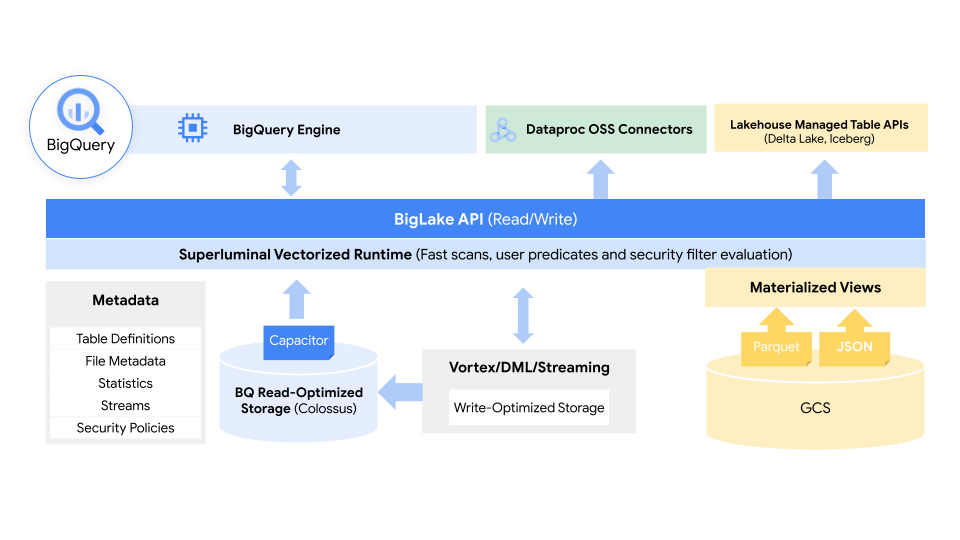

The idea here, at its core, is to take Google’s experience with running and managing its BigQuery data warehouse and extend it to data lakes on Google Cloud Storage, combining the best of data lakes and warehouses into a single service that abstracts away the underlying storage formats and systems.

This data, it’s worth noting, could sit in BigQuery or live on AWS S3 and Azure Data Lake Storage Gen2, too. Through BigLake, developers will get access to one uniform storage engine and the ability to query the underlying data stores through a single system without the need to move or duplicate data.

“Managing data across disparate lakes and warehouses creates silos and increases risk and cost, especially when data needs to be moved,” explains Gerrit Kazmaier, VP and GM of Databases, Data Analytics and Business Intelligence at Google Cloud, notes in today’s announcement. “BigLake allows companies to unify their data warehouses and lakes to analyze data without worrying about the underlying storage format or system, which eliminates the need to duplicate or move data from a source and reduces cost and inefficiencies.”

Using policy tags, BigLake allows admins to configure their security policies at the table, row and column level. This includes data stored in Google Cloud Storage, as well as the two supported third-party systems, where BigQuery Omni, Google’s multi-cloud analytics service, enables these security controls. Those security controls then also ensure that only the right data flows into tools like Spark, Presto, Trino and TensorFlow. The service also integrates with Google’s Dataplex tool to provide additional data management capabilities.

Google notes that BigLake will provide fine-grained access controls and that its API will span Google Cloud, as well as file formats like the open column-oriented Apache Parquet and open-source processing engines like Apache Spark.

“The volume of valuable data that organizations have to manage and analyze is growing at an incredible rate,” Google Cloud software engineer Justin Levandoski and product manager Gaurav Saxena explain in today’s announcement. “This data is increasingly distributed across many locations, including data warehouses, data lakes, and NoSQL stores. As an organization’s data gets more complex and proliferates across disparate data environments, silos emerge, creating increased risk and cost, especially when that data needs to be moved. Our customers have made it clear; they need help.”

In addition to BigLake, Google also today announced that Spanner, its globally distributed SQL database, will soon get a new feature called “change streams.” With these, users can easily track any changes to a database in real time, be those inserts, updates or deletes. “This ensures customers always have access to the freshest data as they can easily replicate changes from Spanner to BigQuery for real-time analytics, trigger downstream application behavior using Pub/Sub, or store changes in Google Cloud Storage (GCS) for compliance,” explains Kazmaier.

Google Cloud also today brought Vertex AI Workbench, a tool for managing the entire lifecycle of a data science project, out of beta and into general availability, and launched Connected Sheets for Looker, as well as the ability to access Looker data models in its Data Studio BI tool.

Read the original article @ TechCrunch