Many people likely know AWS as the world’s largest provider of cloud computing services. But far fewer people think of the Amazon subsidiary as a supercomputing heavyweight.

Largely, that’s because AWS is happy to operate at the less sexy end of the high-performance computing (HPC) spectrum, far from the shiny proof-of-concept systems that decorate the summit of the Top 500 rankings.

Instead, the organization is concerned with democratizing access to supercomputing resources, by making available cloud-based services that a large number of companies and academic institutions can access.

One of the people responsible for delivering on this objective is Brendan Bouffler, known to some as “Boof”, who in his role as Head of Developer Relations for HPC acts as the intermediary between customers and the AWS engineering team.

As someone with years of experience building supercomputers, he contends that, counter-intuitively, it is often the smaller-scale machines that have the greatest impact, because raw performance is not necessarily the most important metric.

“It is fun to design really big machines, because it’s a complex mathematical problem you have to dismantle,” he told us. “But I always got more joy out of building the smaller systems, because that’s where the largest amount of science is done.”

The epiphany at AWS was that this approach to HPC, whereby productivity takes precedence over performance, could be transplanted effectively into the cloud.

HPC in the cloud

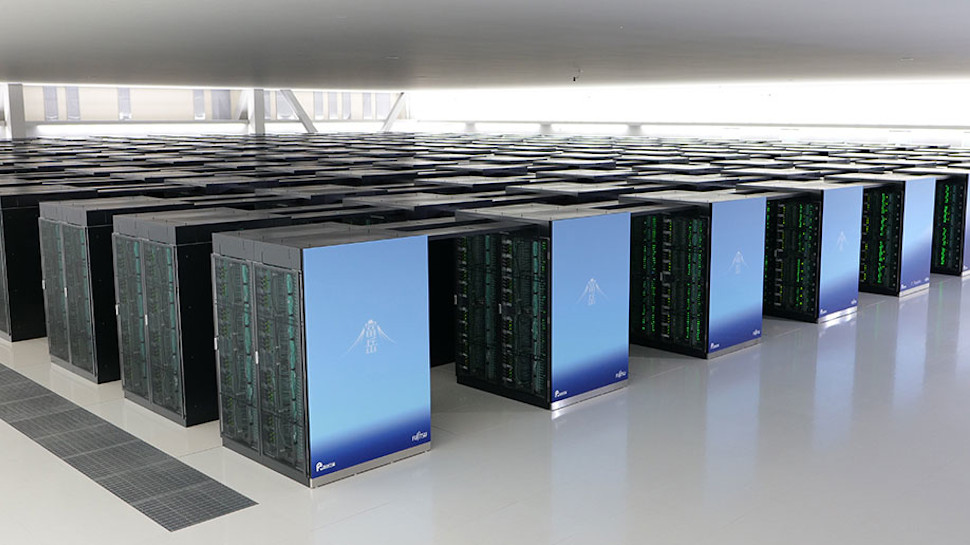

Although large-scale supercomputers like Fugaku, which currently tops the performance rankings, are excellent examples of how far the latest hardware can be pushed, these systems are curiosities first and utilities only second.

As Bouffler explains, the fundamental problem with large on-premise machines is ease of access. A system capable of breaking the exascale barrier would be an impressive feat of engineering, but of diminished practical use if researchers must queue for weeks to use it.

“A lot of people who build supercomputers, myself included, fall into the trap of worrying about squeezing out an additional 1% of performance. It’s laudable on one level, but the obsession means it’s easy to miss the low-hanging fruit,” Bouffler told us.

“What’s more important is the cadence of research; that’s actually where the opportunity is for the scientific community.”

As such, the AWS approach is as much about availability and elasticity as it is performance. With the company’s as-a-service offerings, customers are able to launch their HPC workloads instantaneously in the cloud and scale the allocated resources up or down as required, all but eliminating wastage.

“It’s about creating highly equitable access,” said Bouffler. “If you’ve got the budget and desire to solve a problem, you’ve got the computing resources you need.”

The benefits of such a system have been particularly evident since the start of the pandemic, during which companies like Moderna and AstraZeneca have used AWS instances for the purposes of vaccine development.

According to Bouffler, the world may not have a vaccine today (let alone multiple) without cloud-based HPC, which allowed for research to be kickstarted swiftly and scaled up at a moment’s notice.

“The researchers we worked with wanted flexibility and raw capacity on tap. If you make computing invisible and put the power in the hands of the people with the smart ideas, they can do really powerful things.”

Our data center, our silicon, our rules

Bouffler is the first to admit that the HPC community doesn’t pay a great deal of attention to what’s going on inside AWS. But he insists there is plenty of innovation coming out of the organization.

Historically, for example, cloud-based instances have excelled at running so-called “embarrassingly parallel” workloads that can be divided easily into a high volume of distinct tasks, but perform less well when communication between nodes is required.

Instead of bringing InfiniBand to the cloud, AWS came up with a different way to solve the problem. The company developed a technology called Elastic Fabric Adapter (EFA), which supposedly enables application performance on par with on-premise HPC clusters for complex workloads like machine learning and fluid dynamics simulation.

Unlike InfiniBand, which fires all data packets from A to B down the fastest possible route, EFA spreads the packets thinly across the entire network.

“We had to find a way of running HPC in the cloud, but didn’t want to go and make the cloud look like an HPC cluster. Instead, we decided to redesign the HPC fabric to take advantage of the attributes of the cloud,” Bouffler explained.

“EFA sprays the packets like a swarm across virtually all pathways at once, which yields as good, if not better, performance. The scaling doesn’t stop when the network gets congested, either; the system assumes there is congestion from the outset, so the performance remains flat even as the HPC job gets larger.”
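To make the contrast concrete, here is a toy sketch in Python of the difference between pinning a flow to one route and spraying it across every route. It is not how EFA is actually implemented: the round-robin policy, the function names, and the packet and path counts are all assumptions chosen for illustration, and real fabrics also have to deal with reordering, loss and congestion signals.

```python
# Toy model only: counts how many packets land on each path.
# All names and numbers here are hypothetical, not AWS APIs.

def single_path_load(num_packets: int, num_paths: int, chosen_path: int = 0) -> list[int]:
    """Send every packet of a flow down one chosen path."""
    load = [0] * num_paths
    load[chosen_path % num_paths] = num_packets
    return load


def sprayed_load(num_packets: int, num_paths: int) -> list[int]:
    """Spread packets across all paths (round-robin is an assumed policy)."""
    load = [0] * num_paths
    for packet in range(num_packets):
        load[packet % num_paths] += 1
    return load


def busiest_path(load: list[int]) -> int:
    """Return the packet count on the most heavily used path."""
    return max(load)


if __name__ == "__main__":
    packets, paths = 1000, 8
    print("single path, busiest link:", busiest_path(single_path_load(packets, paths)))
    print("sprayed, busiest link:", busiest_path(sprayed_load(packets, paths)))
```

In this simplified model, the pinned flow puts all 1,000 packets on one link while the sprayed flow puts 125 on each of eight, which is the intuition behind Bouffler's claim that no single route becomes the bottleneck.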

In 2018, meanwhile, AWS announced it would begin developing its own custom Arm-based server processor, called Graviton. Although not geared exclusively towards HPC use cases, the Graviton series has opened a number of doors for AWS, because it allowed the company to rip out all the features that weren’t essential to its needs and double down on those that were.

“When you’re designing something as big as a cloud, you have to assume things are going to fail,” said Bouffler. “Generally speaking, removing unnecessary features means you have much closer control over the failure profile, and having control over the silicon has given us a similar advantage.”

“Graviton3 is optimized up the wazoo for our data centers, because we’re the only customer for these things. We know what our conditions are, whereas other manufacturers have to support the most weird and unusual data center configurations.”

At AWS re:Invent last year, attended by TechRadar Pro, the company launched new EC2 instances powered by Graviton3, which is said to deliver up to 25% better compute performance and 60% better power efficiency than the previous generation, at least in some scenarios.

There are also a number of HPC-centric features built into Graviton3, such as 300GB/sec memory bandwidth, that typical enterprise workloads would never stretch to the limit, Bouffler explained. “We’re pushing in every direction for HPC, that’s what we always do.”

The more HPC, the merrier

Asked where AWS will take its HPC services next, Bouffler quoted a favorite saying of Jeff Bezos: “No customer has ever asked for less variety and higher prices”.

Moving forward, then, Bouffler and his team will continue to sound out customers and work to offer a wider variety of instances, backed by a broader choice of hardware, to address their specific needs.

Another focus will be on bringing down the cost of running HPC workloads in the cloud. With this objective in mind, AWS launched a new AMD EPYC Milan-based EC2 instance in January called Hpc6a, which is two-thirds cheaper than the nearest comparable x86-based equivalent. Bouffler says AWS did “all sorts of nutty things” to help bring down the cost.

It’s not just about academic and scientific use cases, either. AWS is working with a diverse range of companies, from Western Digital to Formula One, to help accelerate product design, and hopes to expand into a broader range of industries in future.

“We’re getting HPC into every nook and cranny of the economy,” added Bouffler. “And the more the merrier.”

Read the original article @ TechRadar – All the latest technology news